In the era of the experience economy (Gartner), the idea that user experience takes precedence over usability and quality in use is gaining more and more ground.

We are also witnessing an inappropriate use of terminology, in which the definitions of usability and user experience (and sometimes even that of quality in use) are used interchangeably, as if they were equivalent.

Consider the following definitions:

Usability (ref. ISO 25000)

The degree to which a product or system can be used by specific users in order to achieve specific objectives with effectiveness, efficiency and satisfaction in a given context of use.

Quality in use (ref. ISO 25000)

The degree to which a product or system can be used by specific users to meet their needs to achieve specific objectives effectively, efficiently, satisfactorily and without risk, in a given context of use.

User Experience (ref. ISO 9241)

The perceptions and reactions of a person, resulting from the use of a product, system or service. The user experience refers to all emotions, beliefs, preferences, perceptions, physical and psychological responses, behaviours and results that occur before, during and after use.

According to ISO, usability and quality in use are closely related: usability can be specified or measured through sub-features of software quality, or it can be specified and measured directly by measures that constitute a subset of quality in use.

The user experience shifts the focus from the software to the user using it, and to that user’s psychological and moral traits, which induce the reactions the individual manifests before, during and after use of the software. Based on these premises, it is clear that if we intend to deal rigorously with user experience, the techniques and methods of software engineering alone are no longer sufficient.

Significant methodological contributions from the psychological and social disciplines are therefore required. For this reason, Clariter’s organization includes specific interdisciplinary teams that address user experience and customer experience issues from the correct angle (see Qalya Sense).

Usability can and must guide the construction of an adequate user experience, but do not be deceived by the overlap (partial or total) of the two perspectives. Usability refers to specific practical aspects (such as efficiency in carrying out tasks), whilst the user experience focuses on the emotional reactions and hedonic pleasure that users develop when using the software.

Another major dichotomy between the two areas of observation lies in how the objectives are determined: usability must be related to precise technical requirements, whereas the user experience must deal with the expectations of users.

For this reason, anticipating and exceeding the wishes and expectations of users is the most effective way to significantly improve the user experience.

All solved? No.

Today the market demands high-speed, high-frequency “agile” release processes, which leave little room for planning structural usability interventions in advance.

This is why at Clariter we have added a new element that responds effectively to the need for both speed and the construction of an excellent experience.

We have introduced expected usability.

Expected Usability is based on a model of social interaction aimed at understanding the qualitative aspects of software that are directly related to expected usability, that is, the levels of usability that the end user expects to experience in using the software.

Clariter’s interdisciplinary team has developed a Likert-driven model of user interaction and feedback collection that yields reliable, scientifically backed data on the sub-features of usability in relation to user expectations.

This offers the possibility of building a usability reference system with a bottom-up approach, where alternative interventions are not applicable.
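As a purely illustrative sketch of the bottom-up idea, the snippet below averages Likert responses per usability sub-feature to produce an expected-usability baseline. It is not Clariter's model: the 1 to 5 scale, the function name `expected_usability_baseline` and the sub-feature labels are all assumptions made for the example.

```python
from collections import defaultdict
from statistics import mean


def expected_usability_baseline(responses, scale_min=1, scale_max=5):
    """Average Likert scores per usability sub-feature.

    `responses` is an iterable of (sub_feature, score) pairs, where score is
    the level of usability a respondent expects (hypothetical 1-5 scale).
    Returns a dict mapping each sub-feature to its mean expected score.
    """
    scores_by_feature = defaultdict(list)
    for sub_feature, score in responses:
        # Reject answers outside the assumed Likert scale.
        if not scale_min <= score <= scale_max:
            raise ValueError(f"score {score} is outside {scale_min}-{scale_max}")
        scores_by_feature[sub_feature].append(score)
    return {feature: mean(scores) for feature, scores in scores_by_feature.items()}


if __name__ == "__main__":
    # Illustrative answers only; the sub-feature names are placeholders.
    sample_responses = [
        ("learnability", 5),
        ("learnability", 4),
        ("operability", 3),
    ]
    print(expected_usability_baseline(sample_responses))
```

The resulting per-feature averages could serve as the reference values against which later releases are compared, which is one way to read "bottom-up" here.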

Because Clariter focuses this technique on the expectations and wishes of users, obtaining reliable and statistically significant results requires assembling, on a large scale, samples of users that represent (at different levels of reliability) the population for whom the software is intended. This is a significant factor for mass-market applications.

The determination of the extension of these samples of experimenters is based on two macro-dimensions:

Probabilistic: margin of error and confidence level considered acceptable in the validation of feedback.

Contextual: breadth of the customer base of reference.

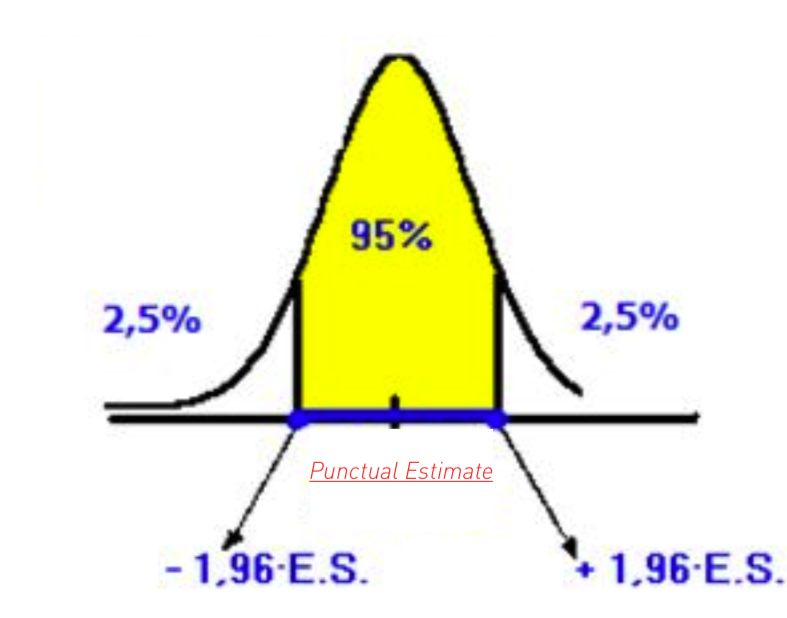

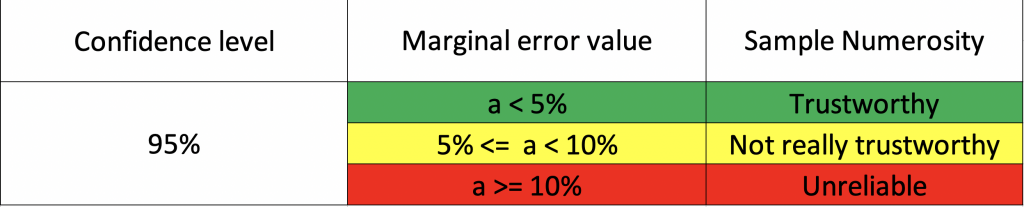

Statistical best practices suggest using a measurement confidence level of at least 95% in order to obtain statistically significant data.

The margin of error indicates how precise and accurate the collected data are: the higher the margin of error, the less accurate and precise the data. In the image above, the radii of the circles represent the margin of error to be maintained on the measurements, and case (b) is much more accurate and precise than case (a).

Therefore, in order to obtain a sample with a statistically significant number of measurements from a population of N, it is necessary to set the margin of error correctly with respect to the confidence level.

The margin of error is a parameter that must be chosen according to the degree of accuracy and precision you want to obtain, and it represents a trade-off among cost, risk and benefit.
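To make the relationship between population size, confidence level and margin of error concrete, here is a minimal sketch using a common textbook approach: Cochran's sample-size formula with a finite population correction. The article does not state which formula Clariter uses, so the choice of formula, the function name `sample_size` and the worst-case proportion of 0.5 are assumptions.

```python
import math
from statistics import NormalDist


def sample_size(population, margin_of_error=0.05, confidence=0.95, proportion=0.5):
    """Estimate the number of respondents needed for a survey.

    Assumes simple random sampling and uses Cochran's formula with a finite
    population correction. proportion=0.5 is the most conservative choice.
    """
    if population < 1:
        raise ValueError("population must be at least 1")
    if not 0 < margin_of_error < 1 or not 0 < confidence < 1:
        raise ValueError("margin_of_error and confidence must be in (0, 1)")
    # Two-sided z-score for the requested confidence level (about 1.96 for 95%).
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    # Cochran's estimate for an effectively infinite population.
    n0 = (z ** 2) * proportion * (1 - proportion) / margin_of_error ** 2
    # Finite population correction shrinks the estimate for small populations.
    n = n0 / (1 + (n0 - 1) / population)
    # Round up, and never ask for more respondents than the population holds.
    return min(population, math.ceil(n))


if __name__ == "__main__":
    # Illustrative customer-base sizes only.
    for customers in (1_000, 100_000, 1_000_000):
        print(customers, sample_size(customers))
```

Under these assumptions, a customer base of one million needs about 384 respondents at a 95% confidence level and a 5% margin of error. Tightening the margin to 1% or raising the confidence level increases the required sample, which is the cost side of the trade-off described above.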

The challenge in this approach is to assemble statistically significant samples of experimenters. Here the contribution of our Crowdsourcing Best Practice is decisive, since it can count on the largest Italian crowdsourcing community (55,000 members in Italy). Clariter works mainly with Tier 1 customers, that is, customers whose services reach large numbers of people on the market (hundreds of thousands, millions). We are therefore continually called upon to assemble very large samples of experimenters (hundreds, thousands) in order to raise the confidence level as high as possible and minimise the margin of error.

Agostino Peloso, CTO, Clariter Group

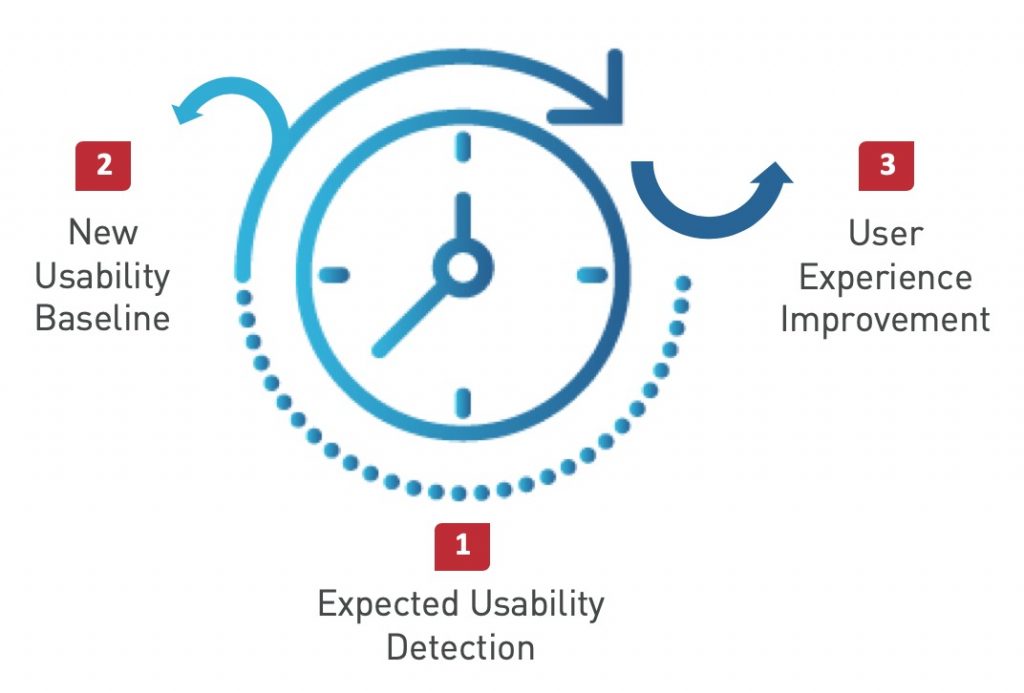

This model is applied continuously, giving Clariter an iterative cycle of continuous refinement that starts from the detection of expected usability, defines the new usability baseline, and produces significant improvements in the user experience.

Learn more about Qalya Sphere.